This post has been edited for better accuracy.

Lately we have finally, and for real, entered a sci-fi era, in the sense that we can converse with artificial intelligence every day. This is no utopia, though: wars are still very much with us, and our AIs are not the all-logical, all-subservient entities Star Trek would have us believe in.

Classic sci-fi used two distinct tropes when it predicted artificial intelligence (and today I will ignore the AI-had-enough-and-rebelled-and-put-us-down kind of stories). In Asimov’s Robot stories, especially the crime mysteries featuring Plainclothesman Elijah Baley, robots are purely deterministic and logical. They are so much so that the investigations themselves revolve around explaining the logic behind a robot’s unforeseen behavior. With Asimov’s robots, everything can be reduced to logical or procedural programming.

Others, like Arthur C. Clarke in 2001: A Space Odyssey, imagined AIs as perfect models of human intelligence and personality, with computers even developing human-like mental health problems (take HAL’s paranoia). Star Wars took this approach and turned it into a parody. Come on, what kind of universe has protocol droids that can be cowards?

Classic sci-fi also imagines that AIs, once they reach a certain level of sophistication, develop the ambition to become increasingly human-like. Examples include Daneel Olivaw in the Robots/Foundation saga, Andrew in Bicentennial Man, Lt. Cmdr. Data in Star Trek, or Rachael Rosen in Do Androids Dream of Electric Sheep? (I’m one of those renegades who reveled in reading the book but could not enjoy the Blade Runner movies.)

DALL-E and the other neural image generators are none of the above, and neither are the other AIs we deal with. They are not logical, they do not resemble human intelligence or personality, and they certainly don’t have ambition. They don’t have much behavior to begin with.

A modern neural AI is compelled to behave in one particular way. By and large, it does one thing: it generates a response to a user’s prompt. This holds whether the AI is making a choice, converting the input into something else (machine translation, for example), or generating a response using its large language model (and its image model, if it is an image generator). It has no power to change this. ChatGPT or GPT-4 will not decide to withhold an answer, and DALL-E will try to depict even the most outlandish ideas. (Unless, of course, your input violates OpenAI’s content guidelines, but that won’t be decided by the AI itself.)

DALL-E and the other image generators empower people to visualize their ideas in ways that were not possible before, provided they can write the right kind of prompt. Social media is now rife with advertisements for trainers and consultants in “prompt engineering”.

This is not necessarily a good thing. There is concern that this kind of empowerment takes the objectification of certain groups of people to a whole new level. We should also not forget that generative AI consumes an enormous amount of energy, and at present its contribution to global warming probably outweighs the value it creates. This will probably change quite soon, though.

That said, I plead guilty to wanting to experiment with it, and I also had to use it for work. So I decided to go to the extreme and use just about any thought or figure of speech as a prompt.

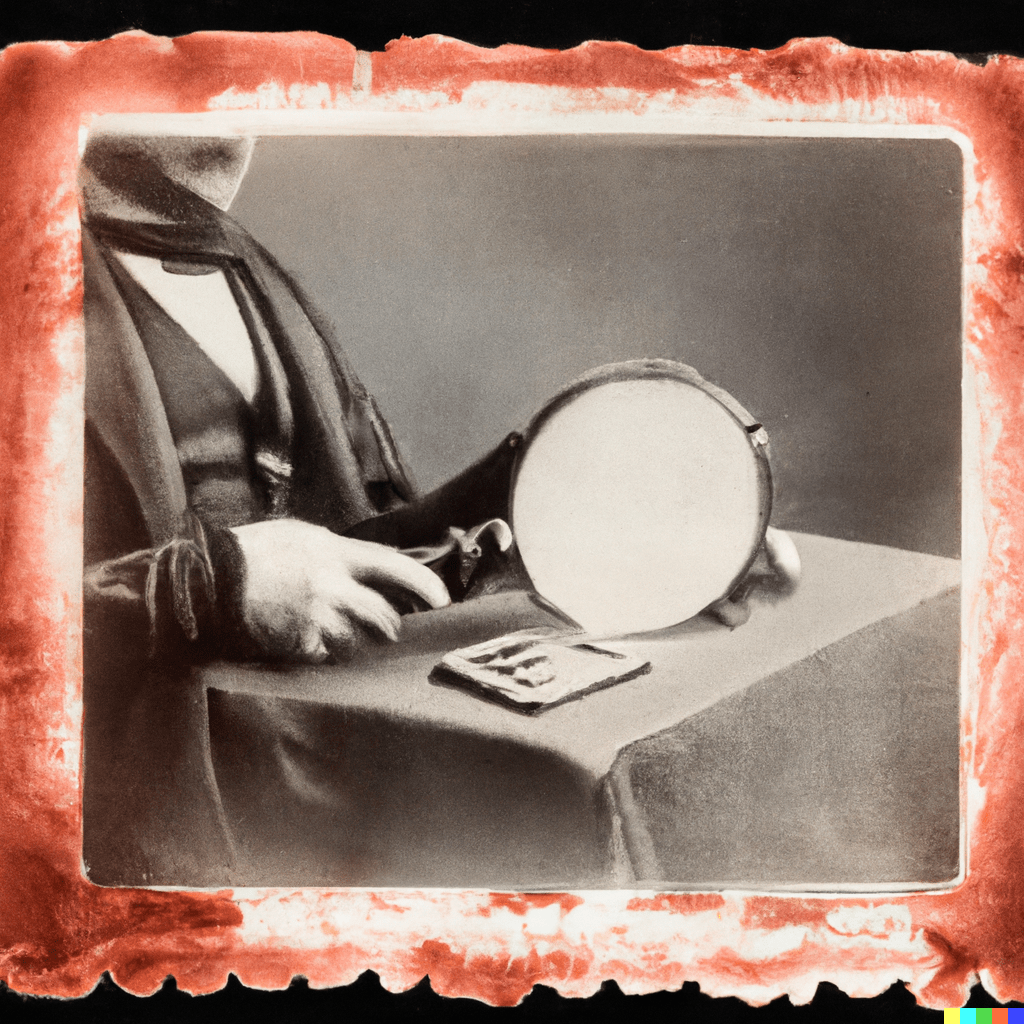

For example, the other day we were talking to our helpdesk manager, and he mentioned an “issue that was mysteriously resolved”. A few minutes later, I asked DALL-E to create “a daguerreotype of an issue that was miraculously and mysteriously fixed”. DALL-E didn’t flinch and produced this (and three others):

The more classic sci-fi you read, the less you would expect an AI, supposedly rational and logical, to be able to draw or visualize abstract concepts. In DALL-E’s defense, it had no idea what it was doing, simply because it has no ideas whatsoever. It has data and a mathematical model for connecting them. But, and this is important, I am not claiming that it isn’t intelligent. It is, but it is also completely alien.

Another thing you wouldn’t expect is that both DALL-E and ChatGPT have a peculiar relationship with logic, numbers, and the anatomy of living things. A few weeks ago I met a GPT-3 instance that could not, for the life of it (which it does not have), continue a Fibonacci sequence, regardless of how many terms I listed in the prompt.

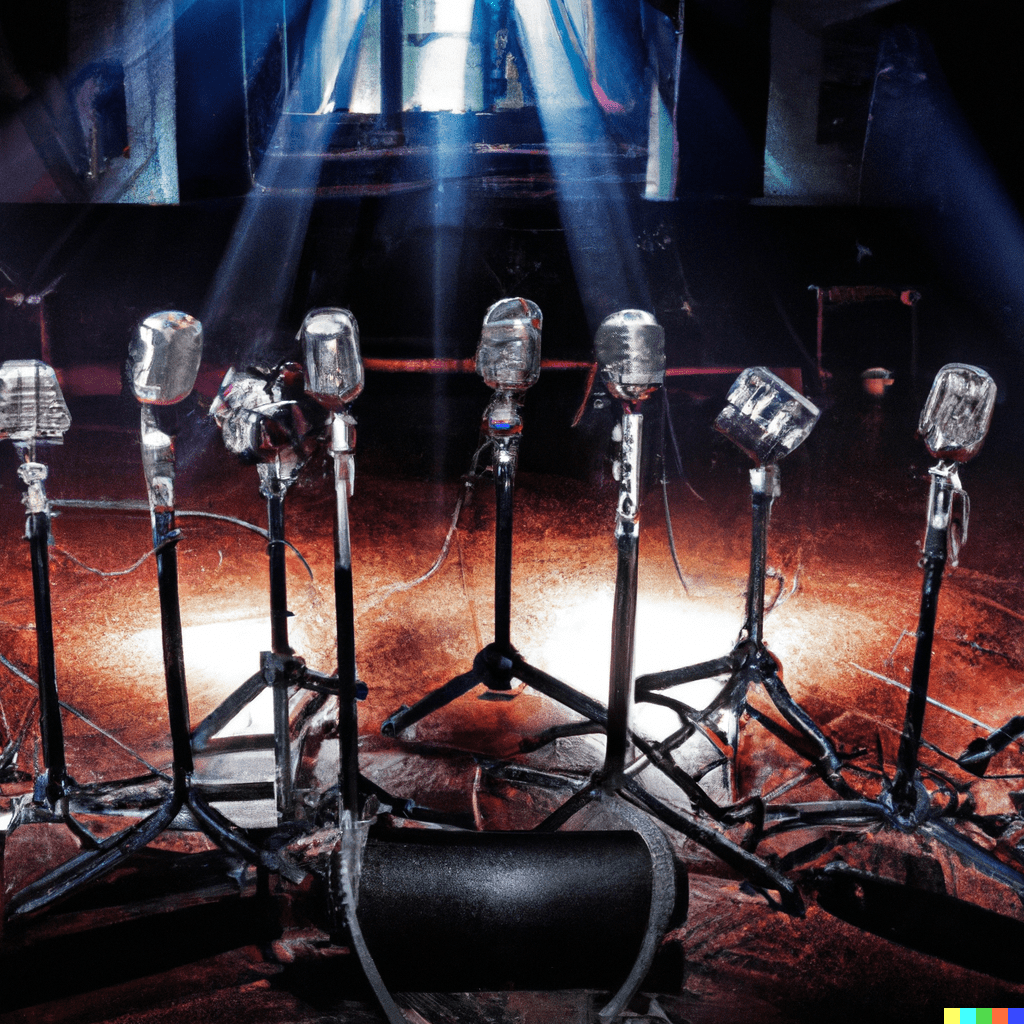

When I asked DALL-E to create a picture with five microphones, it gave me either three or eight:

And when I requested a photo of a robot walking three corgis, either the robot also became a corgi, or I got a corgi that was walking two robots:

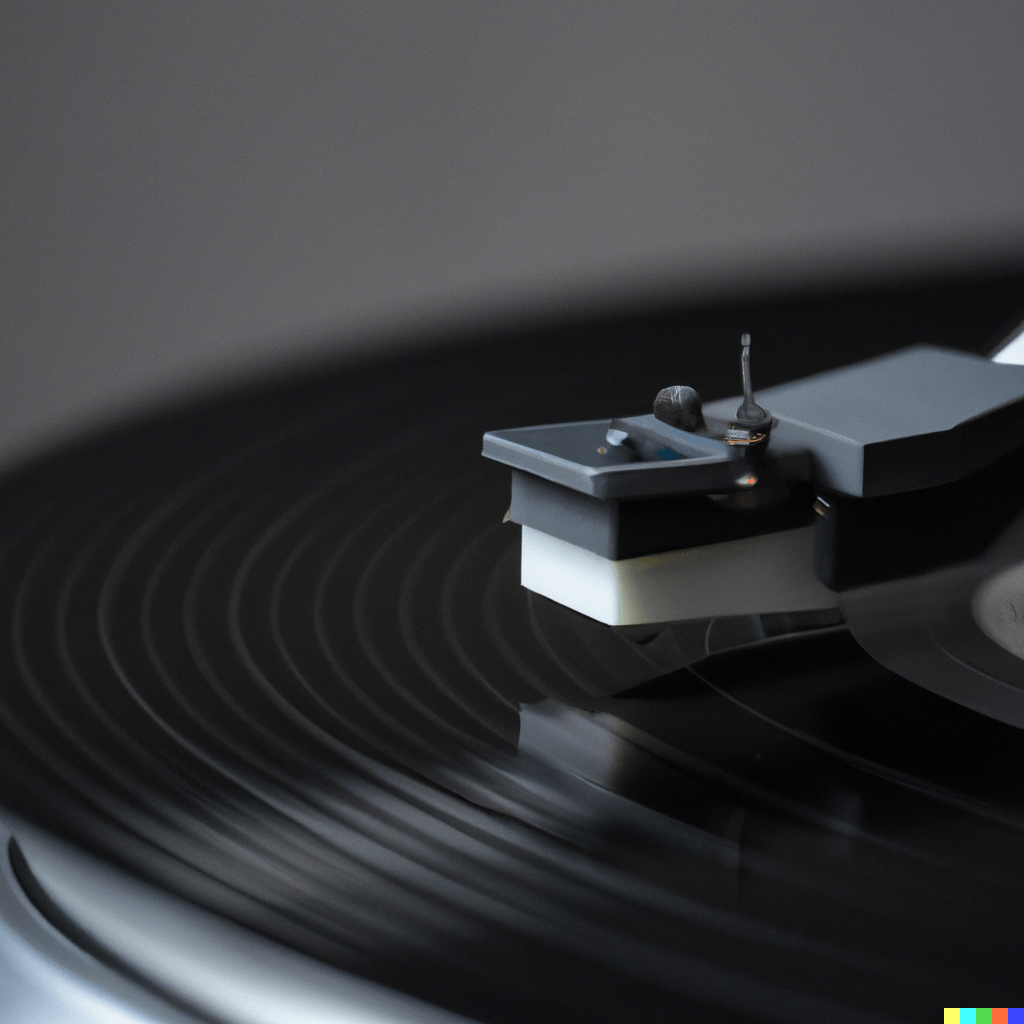

This image of a turntable shows that it hasn’t got the slightest idea what objects are for (in its defense, the four tries included one picture that got it more or less right):

And it will, without any scruples, draw a human hand with seven fingers and no thumb.

So here we are, conversing with an intelligence that shares absolutely none of our experiences and sees the world in a completely different light than we do. In the search for extraterrestrial intelligence (SETI), Carl Sagan and his colleagues assumed that communication with an extraterrestrial intelligence would begin with references to universals such as mathematical constants and axioms. A few decades later, we humans have created a kind of intelligence that cannot be approached that way at all. Nor does it have any sentiments that we can recognize.

DALL-E truly is the perfect alien.